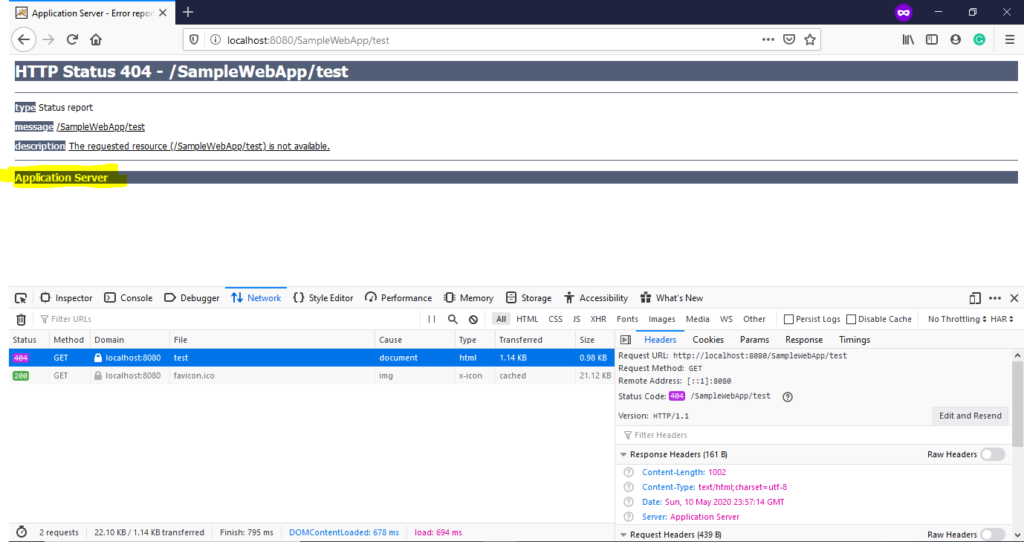

The hugely occupied subfolder appears to be /proc//task (~62M items compared to ~1.7M on the healthy system). Running find /proc | wc -l returns 62497876 (62M), which could reach some OS limit on a similar healthy system it's more like 1800000 (1.8M). Running sysctl fs.file-nr gives this result which is evidently high but seems far from the limit: ~]# sysctl fs.file-nr Running df -i doesn't hint at something bad (and is similar to a healthy system): ~]# df -iįilesystem Inodes IUsed IFree IUse% Mounted on dev/xvdc 1008G 197G 760G 19% /mnt/eternalĬontents of /etc/fstab (which, for some reason, use double mounting - not sure why): /dev/xvdc /mnt/eternal ext4 defaults 0 0Īnd appropriate lines from mount: /dev/xvdc on /mnt/eternal type ext4 (rw) Running df -h shows that the disk is evidently not full: ~]# df -hįilesystem Size Used Avail Use% Mounted on The setup is CentOS 6.5 running under AWS with the said tomcat disk mounted from an EBS volume. Some pointers and clues that I've gathered: Any suggestions on how to fix the issue and be able to mkdir again without restart? I can't explain it for the life of me, and can only resolve using init 6. In fact, I get the same error under multiple different folders that reside under /opt, but not under /opt directly, and not - for example - under /opt/apache-tomcat-7.0.52/logs. Mkdir: cannot create directory `some_folder': No space left on device opt/apache-tomcat-7.0.52]# mkdir some_folder

However my question is about a bizarre phenomenon that accompanies this situation: I cannot mkdir inside the tomcat folder.

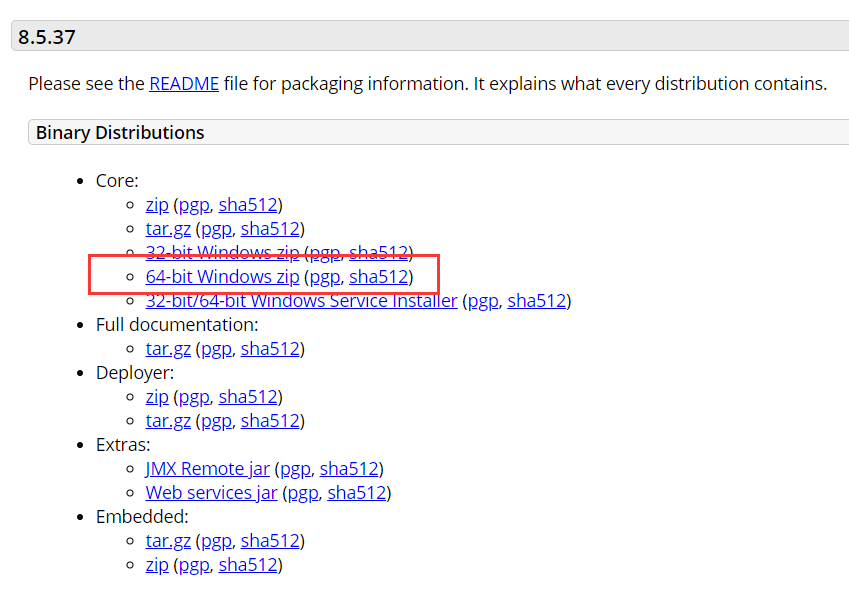

When this happens, the java appears to still be alive, but we can no longer access it. I have a tomcat running a java application that occasionally accumulates socket handles and reaches the ulimit we configured (both soft and hard) for max-open-files, which is 100K.
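To watch how close the JVM gets to these limits before it stalls, something like the following Python sketch could be run as root (reading another process's `/proc/<pid>/fd` needs root or the same user). It assumes the standard Linux `/proc` layout: it reads the three `fs.file-nr` fields from `/proc/sys/fs/file-nr` (allocated handles, free handles, system-wide maximum), counts the entries under `/proc/<pid>/fd` (open descriptors) and `/proc/<pid>/task` (threads), and pulls the soft and hard "Max open files" values from `/proc/<pid>/limits`. The function names and the `proc_root` parameter are hypothetical, added only so the logic can be pointed at a fake directory tree for testing.

```python
import os
import sys


def read_file_nr(proc_root="/proc"):
    """Return (allocated, free, max), the same three numbers `sysctl fs.file-nr` prints."""
    with open(os.path.join(proc_root, "sys", "fs", "file-nr")) as f:
        allocated, free, maximum = (int(x) for x in f.read().split())
    return allocated, free, maximum


def count_entries(pid, sub, proc_root="/proc"):
    """Count entries in /proc/<pid>/<sub>.

    sub="fd"   -> one entry per open file descriptor (sockets included)
    sub="task" -> one entry per thread (TID) of the process
    """
    return len(os.listdir(os.path.join(proc_root, str(pid), sub)))


def max_open_files(pid, proc_root="/proc"):
    """Return the (soft, hard) 'Max open files' limits from /proc/<pid>/limits.

    Values are returned as strings because either may read "unlimited".
    """
    with open(os.path.join(proc_root, str(pid), "limits")) as f:
        for line in f:
            if line.startswith("Max open files"):
                # Line layout: "Max open files  <soft>  <hard>  files"
                fields = line.split()
                return fields[3], fields[4]
    return None


if __name__ == "__main__" and len(sys.argv) == 2:
    pid = int(sys.argv[1])  # PID of the Tomcat JVM, passed on the command line
    allocated, free, maximum = read_file_nr()
    print(f"fs.file-nr: allocated={allocated} free={free} max={maximum}")
    print(f"open fds:   {count_entries(pid, 'fd')}")
    print(f"threads:    {count_entries(pid, 'task')}")
    print(f"nofile:     {max_open_files(pid)}")
```

For example, saving it under a hypothetical name such as `fdcheck.py` and running `python3 fdcheck.py <pid>` periodically would show whether the descriptor count or the thread count is the one climbing toward its limit.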
